GTC SC11

Background

| Link | Code | Version | Machine | Date |
|---|---|---|---|---|
| SC paper | Unreleased | Development Version | Keeneland | Nov. 2011 |

In the fall of 2011, several performance studies were conducted on a port of the GTC application to GPUs (see the full paper).

1/22/2012 - Updated with more details by Chee Wai Lee.

Keeneland was chosen as the site for running these experiments, and it is accessible to the developers as well. This example uses a user-built version of TAU. Instructions for the system-installed version of TAU will be added once we confirm that it works.

The GNU compilers were chosen to minimize conflicts with the CUDA runtime (cudart), which does not support other compilers. Because Keeneland's default environment is "PE-intel", you will probably need to switch to the GNU environment first: `module switch PE-intel PE-gnu`.

Building TAU

PDT:

```shell
cd <path-to-PDT>
./configure -gnu
make && make install
```

TAU:

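```shell
cd <path-to-TAU>
# Configure TAU with PDT, CUDA/CUPTI, BFD, MPI and OpenMP/Opari support, using the GNU compilers
./configure -pdt=<path-to-PDT> -pdt_c++=g++ \
  -cuda=/sw/keeneland/cuda/4.0/linux_binary/ \
  -bfd=download -cc=gcc -c++=g++ -mpi \
  -cupti=/sw/keeneland/cuda/4.0/linux_binary/CUDAToolsSDK/CUPTI/ \
  -openmp -opari
make install
```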

Building GTC

The architecture-specific Makefiles are located in <path-to-GTC>/ARCH. We suggest creating a copy with a name such as "Makefile.keeneland-opt-gnu-tau" (the suffix matches the ARCH value passed to make in the build step below) and giving it the contents shown here, which is the Makefile used for these runs. Note that as of this writing (1/22/2012), users still need to point to a personal copy of the CUDA SDK, as the SDK_HOME setting shows:

```make
# Define the following to 1 to enable build
BENCH_GTC_MPI = 1
BENCH_CHARGEI_PTHREADS = 0
BENCH_PUSHI_PTHREADS = 0
BENCH_SERIAL = 0

# Personal copy of the NVIDIA GPU Computing SDK (see the note above)
SDK_HOME = /nics/c/home/biersdor/NVIDIA_GPU_Computing_SDK/
CUDA_HOME = /sw/keeneland/cuda/4.0/linux_binary
NVCC_HOME = $(CUDA_HOME)

# TAU stub makefile produced by the TAU build above (CUPTI, MPI, PDT, OpenMP/Opari)
TAU_MAKEFILE=/nics/c/home/biersdor/tau2/x86_64/lib/Makefile.tau-cupti-mpi-pdt-openmp-opari
# -optTauSelectFile points the TAU compiler wrapper at the selective instrumentation file
TAU_OPTIONS='-optPdtCOpts=-DPDT_PARSE -optVerbose -optShared -optTauSelectFile=select.tau'
TAU_FLAGS=-tau_makefile=$(TAU_MAKEFILE) -tau_options=$(TAU_OPTIONS)

# C sources are compiled through TAU's compiler wrapper; CUDA sources use plain nvcc
CC = tau_cc.sh $(TAU_FLAGS)
MPICC = tau_cc.sh $(TAU_FLAGS)
NVCC = nvcc
# --compiler-options forwards -finstrument-functions to the host compiler (gcc)
NVCC_FLAGS = -gencode=arch=compute_20,code=\"sm_20,compute_20\" -m64 --compiler-options '-finstrument-functions -fno-strict-aliasing' -I$(NVCC_HOME)/include -I. -DUNIX -O3 -DGPU_ACCEL=1 -I$(SDK_HOME)/C/common/inc -I$(SDK_HOME)/shared/inc
NVCC_LINK_FLAGS = -fPIC -m64 -L$(NVCC_HOME)/lib64 -L$(SDK_HOME)/shared/lib -L$(SDK_HOME)/C/lib -L$(SDK_HOME)/C/common/lib/linux -lcudart -lstdc++

CFLAGS = -DUSE_MPI=1 -DGPU_ACCEL=1
CFLAGSOMP = -fopenmp
COPTFLAGS = -std=c99
# Non-GNU compiler settings, left disabled
#CFLAGSOMP = -mp=bind
#COPTFLAGS = -fast
CDEPFLAGS = -MD
CLDFLAGS = -limf $(NVCC_LINK_FLAGS)
MPIDIR =
CFLAGS += -I$(CUDA_HOME)/include/

EXEEXT = _keeneland_opt_gnu_tau_pdt
AR = ar
ARCRFLAGS = cr
RANLIB = ranlib
```

PDT was chosen to allow event filtering. Here is the select file used:

```
BEGIN_EXCLUDE_LIST
double RngStream_RandU01(RngStream)
double U01(RngStream)
END_EXCLUDE_LIST
```

Save the select file as select.tau (the name given to -optTauSelectFile in TAU_OPTIONS) in <path-to-GTC>/src/mpi.
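If you prefer to create the select file from the shell, a minimal sketch using a here-document follows. It assumes you run it from <path-to-GTC>/src/mpi (left as a comment because the path is a placeholder) and that the file name select.tau matches the -optTauSelectFile setting in the Makefile.

```shell
# Run from the GTC MPI source directory (placeholder path):
# cd <path-to-GTC>/src/mpi

# Write the TAU selective instrumentation file; the quoted 'EOF'
# prevents the shell from expanding anything inside the here-document.
cat > select.tau <<'EOF'
BEGIN_EXCLUDE_LIST
double RngStream_RandU01(RngStream)
double U01(RngStream)
END_EXCLUDE_LIST
EOF
```

Excluding these two small random-number routines keeps their frequent calls from adding instrumentation overhead and cluttering the profile.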

Then build GTC as follows:

```shell
cd <path-to-GTC>/src/mpi
make ARCH=keeneland-opt-gnu-tau
```

If successful, the binary will be available at <path-to-GTC>/src/mpi.

Running GTC

Three sets of simulation parameters were provided with the source code: A, B, and C (the largest). There is also a choice of m-cell size, 20 or 96; 96 requires significantly more memory. The performance results below use parameter sets A and B with an m-cell size of 20.
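A minimal sketch of preparing a traced run, assuming TAU's standard runtime environment variables (TAU_TRACE, TRACEDIR, PROFILEDIR). The directory names are illustrative, and the launcher line, process count and binary name are placeholders, not the command used for the results on this page.

```shell
# Enable TAU event tracing for the instrumented binary
export TAU_TRACE=1
# Illustrative output directories for trace and profile files
export TRACEDIR="$PWD/gtc-traces"
export PROFILEDIR="$PWD/gtc-profiles"
mkdir -p "$TRACEDIR" "$PROFILEDIR"

# Placeholder launch line: substitute your MPI launcher, process count
# and the GTC binary built above.
# mpirun -np <nprocs> ./<gtc-binary>

# After the run, merge the per-process trace files (run inside $TRACEDIR):
# tau_treemerge.pl
```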

Performance Results

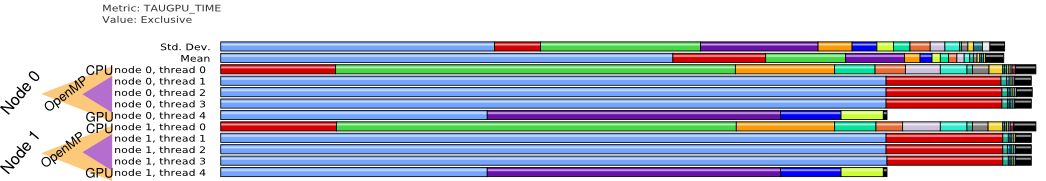

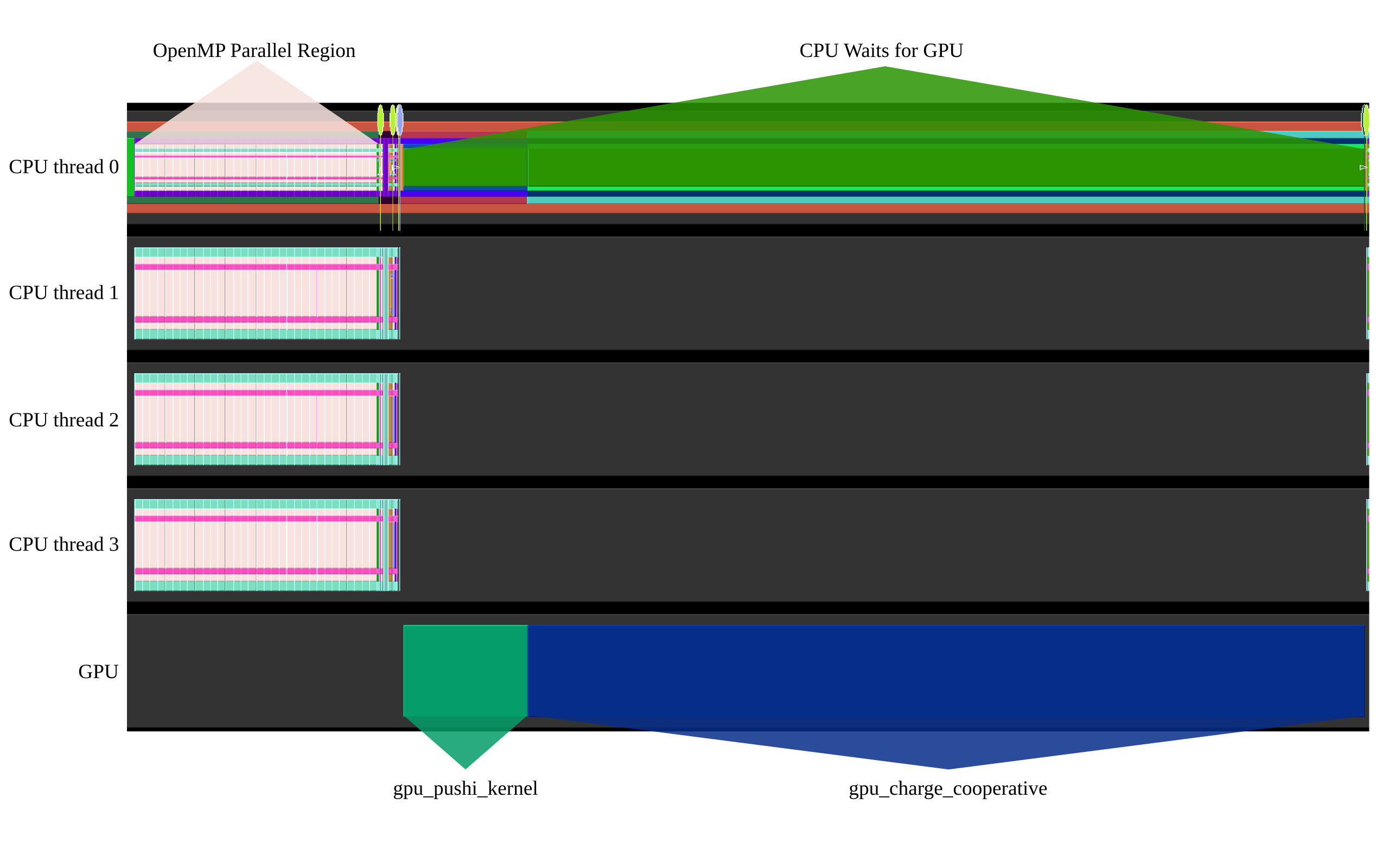

Here are some performance results that show the overall execution model:

Threads instrumented.

This show a trace of a single execution on one MPI process (Multiple nodes/gpus can be utilized as well, performance behavior is similar across each process).

This shows a representative a profile for the GPU.