GTC

Latest revision as of 23:00, 21 March 2013

GTC March 2007 version

Background

| Link | Code Version | Machine | Date |

|---|---|---|---|

| PPPL website | | jacquard, ocracoke | March 2007 |

I have had success building GTC on both jacquard.nersc.gov and ocracoke.renci.org. There are a number of publications on the GTC application - here are a few:

http://www.iop.org/EJ/article/-search=16435725.1/1742-6596/46/1/010/jpconf6_46_010.pdf

http://www.iop.org/EJ/article/-search=16435725.2/1742-6596/16/1/002/jpconf5_16_002.pdf

http://www.iop.org/EJ/article/-search=16435725.3/1742-6596/16/1/008/jpconf5_16_008.pdf

http://www.iop.org/EJ/article/-search=16435725.4/1742-6596/16/1/001/jpconf5_16_001.pdf

There is a README that comes with GTC. Here is the text of that README file:

12/16/2004 HOW TO BUILD AND RUN GTC (S.Ethier, PPPL)

----------------------------

1. You need to use GNU make to compile GTC. The Makefile contains some

gmake syntax, such as VARIABLE:=

2. The Makefile is fairly straightforward. It runs the command "uname -s"

to determine the OS of the current computer. You will need to change

the Makefile if you use a cross-compiler.

3. The executable is called "gtcmpi". GTC runs in single precision unless

specified during the "make" by using "gmake DOUBLE_PRECISION=y".

See the instructions at the beginning of the Makefile.

4. GTC reads an input file called "gtc.input". The distribution contains

several input files for different problem sizes:

All input files use 10 particles per cell (micell=10)

name # of particles # of grid pts approx. memory size

------ ---------------- --------------- -------------

a125 20,709,760 (20M) 2,076,736 (2M) 5 GB

a250 96,620,160 (100M) 9,674,304 (10M) 22 GB

a500 385,479,040 (385M) 38,572,480 (39M) 80 GB

a750 866,577,280 (866M) 86,694,592 (87M) 180 GB

a1000 1,312,542,080 (1.3B) 131,300,288(131M) 272 GB

For a specific "device size" (fixed number of grid points), one can

change the number of particles per grid cell by increasing or decreasing

the input variable "micell", the number of particles per cell.

5. To run one of the cases, copy the chosen input file into "gtc.input".

6. For the given input files, the maximum number of processors that one

can use for the grid-base 1D domain decomposition is 64. This limit

comes from the mzetamax parameter in the input file. To access more

processors, increase the "npartdom" parameter accordingly. "npartdom"

controls the particle decomposition inside a domain. If, for example,

npartdom=2, the particles in a domain will be split equally between

2 processors. Here are some quick rules:

mzetamax=64, npartdom=1 --> possible no. of processors = 1,2,4,8,16,32,64

mzetamax=64, npartdom=2 --> use 128 processors

mzetamax=64, npartdom=4 --> use 256 processors

etc...

When npartdom is large, it's a good idea to increase the number of

particles per cell by changing the parameter "micell" in gtc.input.

micell=100 is a decent number of particles, although the memory

footprint is larger.
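The quick rules in item 6 of the README can be sketched as a small shell helper. This is only an illustration: the function name `gtc_nprocs` is hypothetical, and the formula (total processors = mzetamax × npartdom) is inferred from the three example rules rather than taken from the GTC source.

```shell
#!/bin/sh
# gtc_nprocs MZETAMAX NPARTDOM
# Hypothetical helper: prints the processor count implied by the README's
# quick rules, assuming total = mzetamax * npartdom.
gtc_nprocs() {
    mzetamax=$1
    npartdom=$2
    echo $((mzetamax * npartdom))
}

gtc_nprocs 64 1   # prints 64
gtc_nprocs 64 2   # prints 128
gtc_nprocs 64 4   # prints 256
```

With npartdom=1, the README also allows any power of two up to mzetamax; the helper only reports the upper limit in that case.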

Auto-instrumenting GTC with TAU is straightforward. On jacquard.nersc.gov, configure TAU with the following options:

-c++=pathCC -cc=pathcc -fortran=pathscale -useropt=-O3 \
-pdt=/usr/common/homes/k/khuck/pdtoolkit \
-mpiinc=/usr/common/usg/mvapich/pathscale/mvapich-0.9.5-mlx1.0.3/include \
-mpilib=/usr/common/usg/mvapich/pathscale/mvapich-0.9.5-mlx1.0.3/lib \
-mpilibrary=-lmpich#-L/usr/local/ibgd/driver/infinihost/lib64#-lvapi \
-papi=/usr/common/usg/papi/3.1.0 -MULTIPLECOUNTERS \
-useropt=-I/usr/common/usg/mvapich/pathscale/mvapich-0.9.5-mlx1.0.3/include/f90base

Then add a section like the following to the Linux build area of the GTC Makefile (the extra include path locates the MPI module file):

ifeq ($(TAUF90),y)

F90C:=tau_f90.sh -optTauSelectFile=select.tau

CMP:=tau_f90.sh -optTauSelectFile=select.tau

OPT:=-O -freeform -I/usr/common/usg/mvapich/pathscale/mvapich-0.9.5-mlx1.0.3/include/f90base

OPT2:=-O -freeform -I/usr/common/usg/mvapich/pathscale/mvapich-0.9.5-mlx1.0.3/include/f90base

LIB:=

endif

To build the TAU instrumented version, use the following gmake command:

gmake TAUF90=y

For a 64-processor run, copy gtc.input.64p to gtc.input. To use fewer processors, reduce "mzetamax" so that it equals the number of processors. For more than 64 processors, see the README instructions above.

To run the test with fewer iterations, change the "mstep" parameter in the gtc.input file to something smaller than 100 (10 or 12 is fine).
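Editing "mstep" or "mzetamax" by hand works fine, but the change can also be scripted. The sketch below assumes that integer parameters appear in gtc.input as `name=<digits>` (optionally with spaces around the `=`); that layout is an assumption, so check your input file first. The function name `set_param` is hypothetical.

```shell
#!/bin/sh
# set_param FILE NAME VALUE
# Hypothetical helper: prints FILE to stdout with "NAME=<integer>" rewritten
# to "NAME=VALUE". Assumes the parameter is written as NAME=<digits>,
# possibly with spaces around "="; spacing is normalized to NAME=VALUE.
set_param() {
    sed "s/$2[[:space:]]*=[[:space:]]*[0-9][0-9]*/$2=$3/" "$1"
}

# Example (writes a modified copy, then replaces the original):
#   set_param gtc.input mstep 10 > gtc.input.new && mv gtc.input.new gtc.input
```

Writing to a new file and then moving it into place avoids relying on `sed -i`, whose syntax differs between platforms.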

Here is an example batch submission script to run GTC on 64 processors (32 nodes with 2 processes per node) of jacquard:

#PBS -l nodes=32:ppn=2,walltime=00:30:00

#PBS -N gtcmpi

#PBS -o gtcmpi.64.out

#PBS -e gtcmpi.64.err

#PBS -q batch

#PBS -A m88

#PBS -V

setenv PATH $HOME/tau2/x86_64/bin:${PATH}

setenv TAU_CALLPATH_DEPTH 500

setenv COUNTER1 GET_TIME_OF_DAY

setenv COUNTER2 PAPI_FP_INS

setenv COUNTER3 PAPI_TOT_CYC

setenv COUNTER4 PAPI_L1_DCM

setenv COUNTER5 PAPI_L1_DCM

cd /u5/khuck/gtc_bench/test/64

mpiexec -np 64 gtcmpi
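The relation between the `nodes=32:ppn=2` request and `mpiexec -np 64` in the script above can be sketched as a one-line calculation. The helper name `pbs_nodes` is hypothetical, and it is written in POSIX sh rather than the csh syntax used in the batch script.

```shell
#!/bin/sh
# pbs_nodes NP PPN
# Hypothetical helper: prints the smallest node count that can hold
# NP processes at PPN processes per node (integer ceiling of NP/PPN).
pbs_nodes() {
    echo $((($1 + $2 - 1) / $2))
}

pbs_nodes 64 2   # prints 32, matching nodes=32:ppn=2 with mpiexec -np 64
```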

--Khuck 21:45, 7 March 2007 (PST)

GTC SC 2011 Version (GPU)

Background

| Link | Code | Version | Machine | Date |

|---|---|---|---|---|

| SC paper | Unreleased | Development Version | Keeneland | Nov. 2011 |

In the fall of 2011, several performance studies were conducted on a port of the GTC application to GPUs (full paper).

1/22/2012 - Updated with more details by Chee Wai Lee.

Keeneland was chosen as the site for running these experiments, and is accessible to the developers as well. This example makes use of a user-built version of TAU. Instructions will be added once we confirm that the system-installed version of TAU works.

The GNU compilers were chosen to minimize conflicts with CUDArt, which does not support other compilers. Because Keeneland's default environment is "PE-intel", the following module switch is probably needed: "module switch PE-intel PE-gnu".

Building TAU

PDT:

cd <path-to-PDT>

./configure -gnu

make && make install

TAU:

cd <path-to-TAU>

./configure -pdt=<path-to-PDT> -pdt_c++=g++ -cuda=/sw/keeneland/cuda/4.0/linux_binary/ -bfd=download -cc=gcc -c++=g++ -mpi -cupti=/sw/keeneland/cuda/4.0/linux_binary/CUDAToolsSDK/CUPTI/ -openmp -opari

make install

Building GTC

Here is the Makefile used. The architecture-specific Makefiles are located in <path-to-GTC>/ARCH. Users are advised to make a copy with a name such as "Makefile.keeneland-opt-gnu-tau". Note that as of this writing (1/22/2012), users still need to point to a personal copy of the CUDA SDK (see the SDK_HOME setting):

# Define the following to 1 to enable build

BENCH_GTC_MPI = 1

BENCH_CHARGEI_PTHREADS = 0

BENCH_PUSHI_PTHREADS = 0

BENCH_SERIAL = 0

SDK_HOME = /nics/c/home/biersdor/NVIDIA_GPU_Computing_SDK/

CUDA_HOME = /sw/keeneland/cuda/4.0/linux_binary

NVCC_HOME = $(CUDA_HOME)

TAU_MAKEFILE=/nics/c/home/biersdor/tau2/x86_64/lib/Makefile.tau-cupti-mpi-pdt-openmp-opari

TAU_OPTIONS='-optPdtCOpts=-DPDT_PARSE -optVerbose -optShared -optTauSelectFile=select.tau'

TAU_FLAGS=-tau_makefile=$(TAU_MAKEFILE) -tau_options=$(TAU_OPTIONS)

CC = tau_cc.sh $(TAU_FLAGS)

MPICC = tau_cc.sh $(TAU_FLAGS)

NVCC = nvcc

NVCC_FLAGS = -gencode=arch=compute_20,code=\"sm_20,compute_20\" -gencode=arch=compute_20,code=\"sm_20,compute_20\" -m64 --compiler-options '-finstrument-functions -fno-strict-aliasing' -I$(NVCC_HOME)/include -I. -DUNIX -O3 -DGPU_ACCEL=1 -I./ -I$(SDK_HOME)/C/common/inc -I$(SDK_HOME)/shared/inc

NVCC_LINK_FLAGS = -fPIC -m64 -L$(NVCC_HOME)/lib64 -L$(SDK_HOME)/shared/lib -L$(SDK_HOME)/C/lib -L$(SDK_HOME)/C/common/lib/linux -lcudart -lstdc++

CFLAGS = -DUSE_MPI=1 -DGPU_ACCEL=1

CFLAGSOMP = -fopenmp

COPTFLAGS = -std=c99

#CFLAGSOMP = -mp=bind

#COPTFLAGS = -fast

CDEPFLAGS = -MD

CLDFLAGS = -limf $(NVCC_LINK_FLAGS)

MPIDIR =

CFLAGS += -I$(CUDA_HOME)/include/

EXEEXT = _keeneland_opt_gnu_tau_pdt

AR = ar

ARCRFLAGS = cr

RANLIB = ranlib

PDT was chosen to allow for event filtering. Here is the select file used:

BEGIN_EXCLUDE_LIST
double RngStream_RandU01(RngStream)
double U01(RngStream)
END_EXCLUDE_LIST

The select file should be placed in <path-to-GTC>/src/mpi.
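One way to create the select file in place is with a shell here-document. This is a minimal sketch: the function name `write_select_file` is hypothetical, and the file name `select.tau` is taken from the `-optTauSelectFile=select.tau` option in the Makefile above.

```shell
#!/bin/sh
# write_select_file DIR
# Hypothetical helper: creates DIR/select.tau containing the exclude list
# given on this page (one routine signature per line).
write_select_file() {
    cat > "$1/select.tau" <<'EOF'
BEGIN_EXCLUDE_LIST
double RngStream_RandU01(RngStream)
double U01(RngStream)
END_EXCLUDE_LIST
EOF
}

# Usage: write_select_file <path-to-GTC>/src/mpi
```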

Then build GTC as follows:

cd <path-to-GTC>/src/mpi

make ARCH=keeneland-opt-gnu-tau

If successful, the binary will be available at <path-to-GTC>/src/mpi.

Running GTC

Three sets of simulation parameters were provided with the source code: A, B, and C (the largest). There is also a choice of m-cell size, 20 or 96; 96 requires significantly more memory. Sets A and B with m-cell size 20 were used for these performance results.

Performance Results

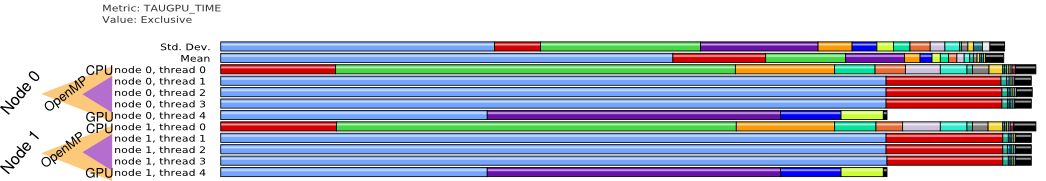

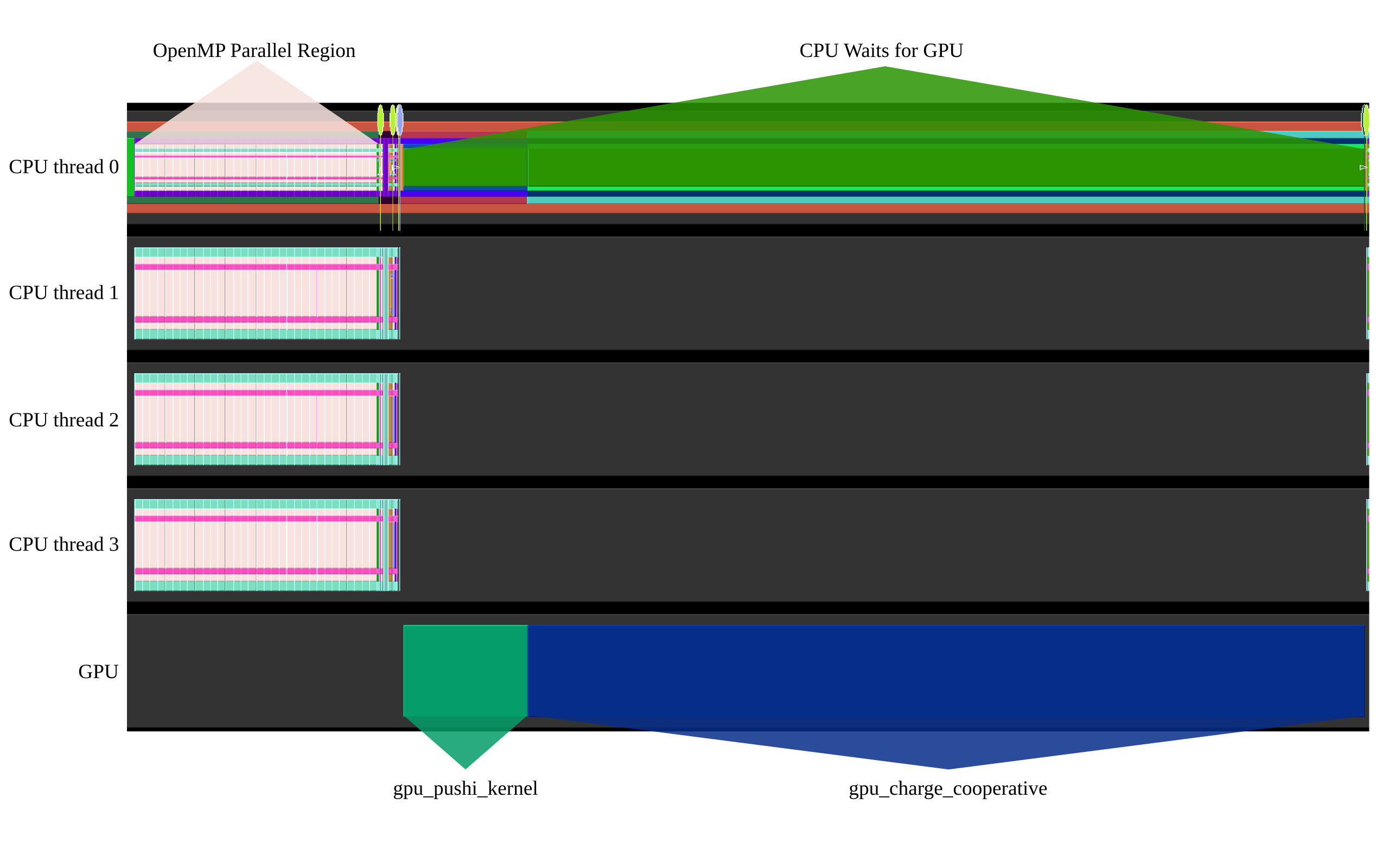

Here are some performance results that show the overall execution model:

Threads instrumented.

This shows a trace of a single execution on one MPI process. Multiple nodes/GPUs can be utilized as well; performance behavior is similar across processes.

This shows a representative profile for the GPU.

GTC C 2013 version

Background

| Link | Code Version | Machine | Date |

|---|---|---|---|

| Presentation | | vesta | February 2013 |

TAU configuration

./configure -cc=mpicc -c++=mpic++ -fortran=ibm -arch=bgq -BGQTIMERS -pdt=/soft/perftools/tau/pdtoolkit-3.18.1 -pdt_c++=xlC -pdtarchdir=bgq -bfd=download -iowrapper -mpi -mpiinc=/bgsys/drivers/ppcfloor/comm/xl/include -mpilib=/bgsys/drivers/ppcfloor/comm/xl/lib -opari -useropt=-O2\ -g

Build

Use the attached Makefile.vesta-tau:

export TAU_MAKEFILE=<path to TAU>/bgq/lib/Makefile.tau-bgqtimers-mpi-pdt-openmp-opari

make ARCH=vesta-tau -j

Run with the attached T.txt (10 iterations instead of 100):

#!/bin/sh

PARTITION=`qstat -u $USER | grep running | awk '{print $6}'`

NPROCS=`qstat -u $USER | grep running | awk '{print $4}'`

runjob --env-all --block $PARTITION -n $NPROCS -p 1 : bench_gtc_vesta_tau T.txt 20 4